- There are two main types of noise in fluorescence microscopy: photon noise & read noise
- Photon noise is signal-dependent, varying throughout an image
- Read noise is signal-independent, and depends upon the detector
- Detecting more photons reduces the impact of both noise types

Noise

Introduction

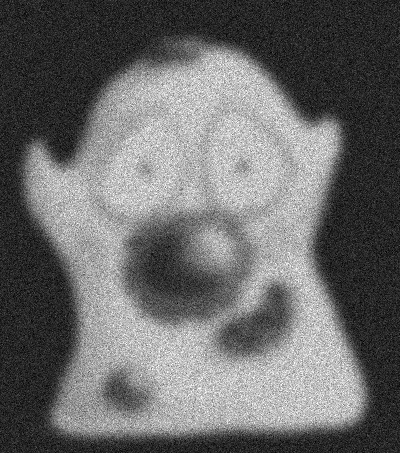

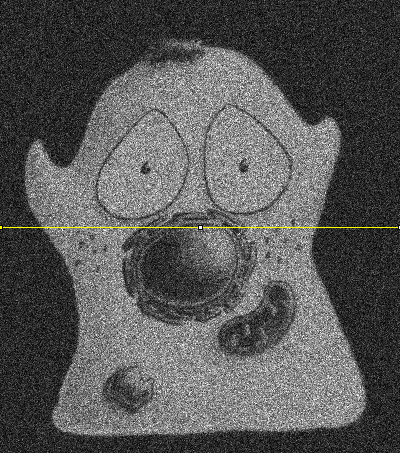

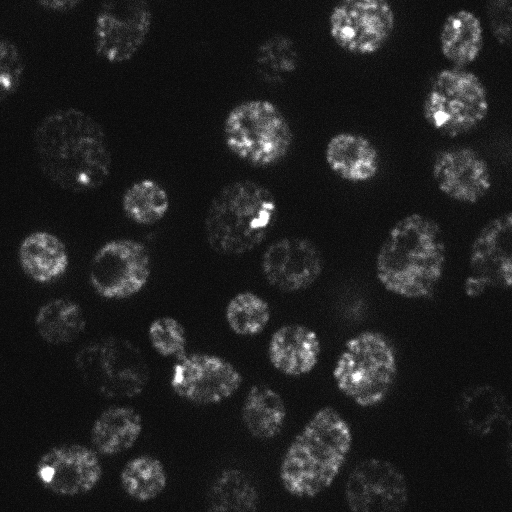

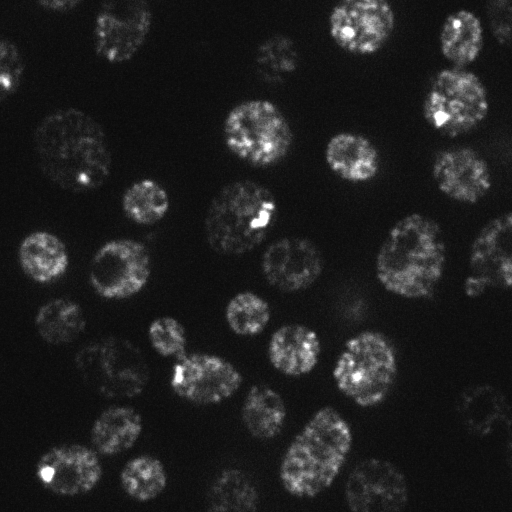

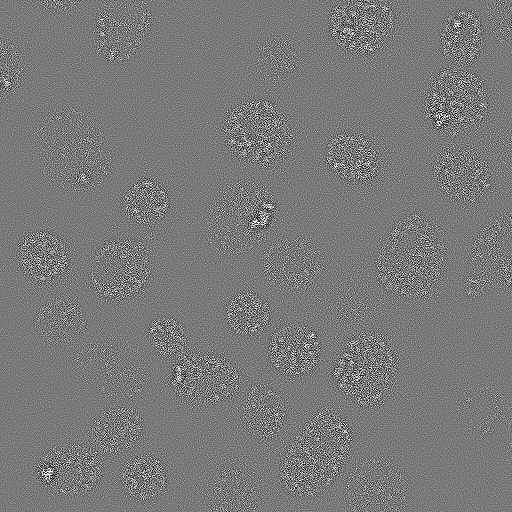

We could reasonably expect that a noise-free microscopy image should look pleasantly smooth, not least because the convolution with the PSF has a blurring effect that softens any sharp transitions. Yet in practice raw fluorescence microscopy images are not smooth. They are always, to a greater or lesser extent, corrupted by noise. This appears as a random 'graininess' throughout the image, which is often so strong as to obscure details.

This chapter considers the nature of the noisiness, where it comes from, and what can be done about it. Before starting, it may be helpful to know that the one major lesson of this chapter for the working microscopist is simply: to reduce noise, detect more photons.

This general guidance applies in the overwhelming majority of cases when a good quality microscope is functioning properly. Nevertheless, it may be helpful to know a bit more detail about why – and what you might do if detecting more photons is not feasible.

Background

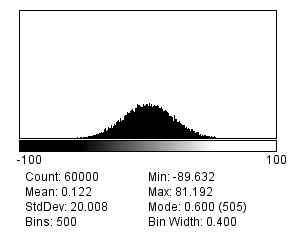

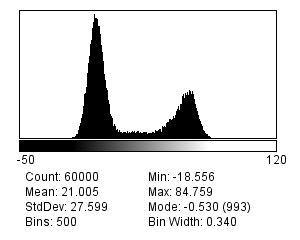

In general, we can assume that noise in fluorescence microscopy images has the following three characteristics, illustrated in Figure 1:

-

Noise is random – For any pixel, the noise is a random positive or negative number added to the 'true value' the pixel should have.

-

Noise is independent at each pixel – The value of the noise at any pixel does not depend upon where the pixel is, or what the noise is at any other pixel.

-

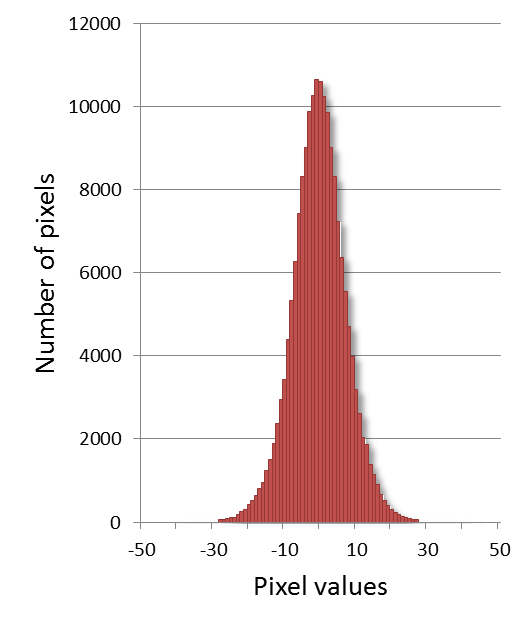

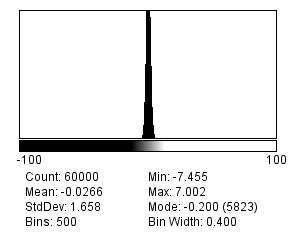

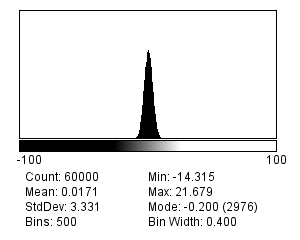

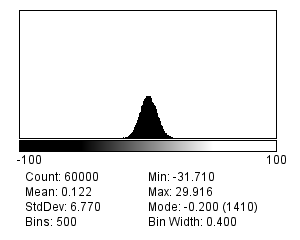

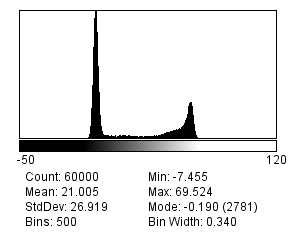

Noise follows a particular distribution – Each noise value can be seen as a random variable drawn from a particular distribution. If we have enough noise values, their histogram would resemble a plot of the distribution[1].

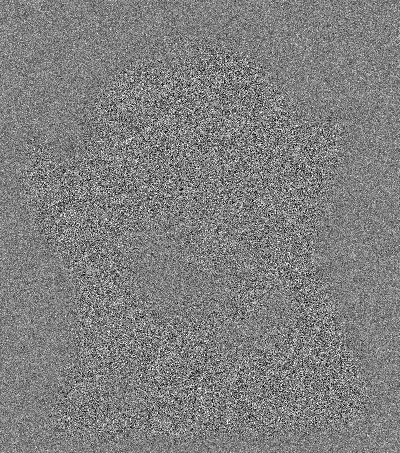

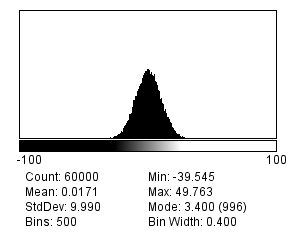

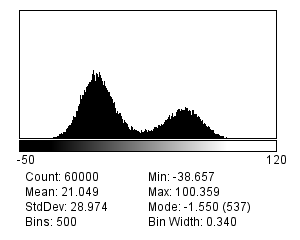

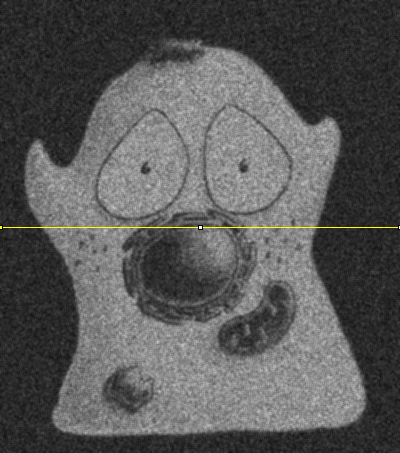

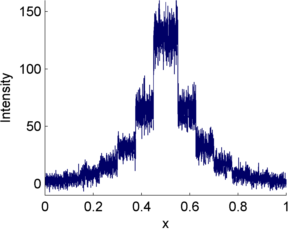

There are many different possible noise distributions, but we only need to consider the Poisson and Gaussian cases. No matter which of these we have, the most interesting distribution parameter for us is the standard deviation. Assuming everything else stays the same, if the standard deviation of the noise is higher then the image looks worse (Figure 2).

The reason we will consider two distributions is that there are two main types of noise for us to worry about:

-

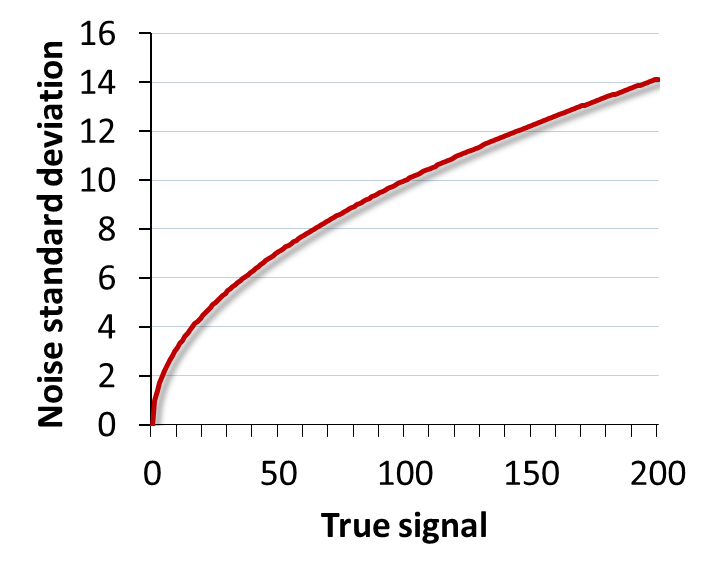

Photon noise, from the emission (and detection) of the light itself. This follows a Poisson distribution, for which the standard deviation changes with the local image brightness.

-

Read noise, arising from inaccuracies in quantifying numbers of detected photons. This follows a Gaussian distribution, for which the standard deviation stays the same throughout the image.

Therefore the noise in the image is really the result of adding two[2] separate random components together. In other words, to get the value $P$ of any pixel you need to calculate the sum of the 'true' (noise-free) value $T$, a random photon noise value $N_p$, and a random read noise value $N_r$, i.e.

$$P = T + N_p + N_r$$

Finally, some useful maths: suppose we add two random noisy values together. Both are independent and drawn from distributions (Gaussian or Poisson) with standard deviations $\sigma_1$ and $\sigma_2$. The result is a third random value, drawn from a distribution with a standard deviation $\sqrt{\sigma_1^2 + \sigma_2^2}$. On the other hand, if we multiply a noisy value from a distribution with a standard deviation $\sigma_1$ by a positive constant $k$, the result is noise from a distribution with a standard deviation $k\sigma_1$.
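Both rules can be checked with a quick simulation. The sketch below uses only Python's standard library; the standard deviations 3 and 4, the multiplier 2.5, the sample count, and all variable names are arbitrary choices made for illustration.

```python
import math
import random
import statistics

rng = random.Random(0)  # fixed seed so the run is repeatable
n = 50_000

# Illustrative values only: two noise standard deviations and a multiplier
sigma_1, sigma_2, k = 3.0, 4.0, 2.5

# Two independent sets of zero-mean Gaussian noise values
a = [rng.gauss(0.0, sigma_1) for _ in range(n)]
b = [rng.gauss(0.0, sigma_2) for _ in range(n)]

# Measure the standard deviation after adding, and after multiplying
std_sum = statistics.pstdev([x + y for x, y in zip(a, b)])
std_scaled = statistics.pstdev([k * x for x in a])

# Predictions from the two rules
predicted_sum = math.sqrt(sigma_1**2 + sigma_2**2)
predicted_scaled = k * sigma_1

print(f"sum:    measured {std_sum:.3f}, predicted {predicted_sum:.3f}")
print(f"scaled: measured {std_scaled:.3f}, predicted {predicted_scaled:.3f}")
```

The measured values should land close to the predictions, with the small remaining difference shrinking as `n` grows.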

These are all my most important noise facts, upon which the rest of this chapter is built. We will begin with Gaussian noise because it is easier to work with, found in many applications, and widely studied in the image processing literature. However, in most fluorescence images photon noise is the more important factor.

Gaussian noise

Gaussian noise is a common problem in fluorescence images acquired using a CCD camera (see Microscopes & detectors). It arises at the stage of quantifying the number of photons detected for each pixel. Quantifying photons is hard to do with complete precision, and the result is likely to be wrong by at least a few photons. This error is the read noise.

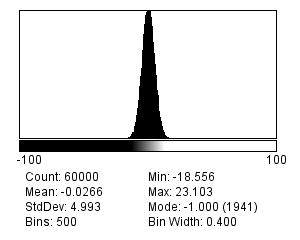

Read noise typically follows a Gaussian distribution and has a mean of zero: this implies there is an equal likelihood of over- or underestimating the number of photons. Furthermore, according to the properties of Gaussian distributions, we should expect around 68% of measurements to lie within ±1 standard deviation of the true, read-noise-free value. A low read noise standard deviation is therefore a good thing in a detector: it means the error should be small.
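The 68% figure can be checked numerically. This sketch uses `statistics.NormalDist` from Python's standard library; the read noise standard deviation of 3 photons is an assumed value chosen only for illustration, not one taken from any particular detector.

```python
from statistics import NormalDist

# Read noise modelled as zero-mean Gaussian. The standard deviation
# (in photons) is an assumed, purely illustrative value.
read_noise = NormalDist(mu=0.0, sigma=3.0)

# Probability that the error lies within +/-1 and +/-2 standard deviations
within_1 = read_noise.cdf(3.0) - read_noise.cdf(-3.0)
within_2 = read_noise.cdf(6.0) - read_noise.cdf(-6.0)

print(f"within 1 standard deviation:  {within_1:.1%}")
print(f"within 2 standard deviations: {within_2:.1%}")
```

The proportions do not depend on the standard deviation chosen, only on how many standard deviations wide the interval is.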

Signal-to-Noise Ratio (SNR)

Read noise is said to be signal independent: its standard deviation is constant, and does not depend upon how many photons are being quantified. However, the extent to which read noise is a problem probably does depend upon the number of photons. For example, if we have detected 20 photons, a noise standard deviation of 10 photons is huge; if we have detected 10 000 photons, it is likely not so important.

A better way to assess the noisiness of an image is then the ratio of the interesting part of each pixel (called the signal, which is here what we would ideally detect in terms of photons) to the noise standard deviation, which together is known as the Signal-to-Noise Ratio[3]:

$$\text{SNR} = \frac{\text{Signal}}{\text{Noise standard deviation}}$$
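Applying this ratio to the earlier example of 20 and 10 000 detected photons with a read noise standard deviation of 10 photons makes the difference concrete. The function name `snr` is hypothetical, and only read noise is considered here.

```python
def snr(signal, noise_std):
    """Signal-to-Noise Ratio: signal divided by the noise standard deviation."""
    return signal / noise_std


read_noise_std = 10.0  # photons, as in the example in the text

for photons in (20, 10_000):
    print(f"{photons:>6} photons -> SNR = {snr(photons, read_noise_std):g}")
```

The same read noise gives an SNR of 2 in the first case and 1000 in the second.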

Gaussian noise simulations

I find the best way to learn about noise is by creating simulation images, and exploring their properties through making and testing predictions. Image analysis software generally provides a command that will add Gaussian noise with a standard deviation of your choosing to any image. If you apply this to an empty 32-bit image, you can see noise on its own.
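The same experiment can be scripted. This is a minimal sketch using only Python's standard library, with the image held as a list of rows of floats; the image size, the noise standard deviation of 5, and the variable names are all assumptions made for illustration.

```python
import random
import statistics

rng = random.Random(42)  # fixed seed so the run is repeatable
width, height = 128, 128
noise_std = 5.0  # assumed value; try changing it and predicting the result

# An 'empty' floating-point image: every pixel is zero
image = [[0.0] * width for _ in range(height)]

# Add an independent Gaussian noise value to every pixel
noisy = [[pixel + rng.gauss(0.0, noise_std) for pixel in row] for row in image]

# Summarise the noise: the mean should be near 0, the std near noise_std
pixels = [p for row in noisy for p in row]
measured_mean = statistics.fmean(pixels)
measured_std = statistics.pstdev(pixels)

print(f"mean = {measured_mean:.3f}, standard deviation = {measured_std:.3f}")
```

A floating-point image matters here because the noise values are negative about half the time, which an unsigned integer image could not store.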

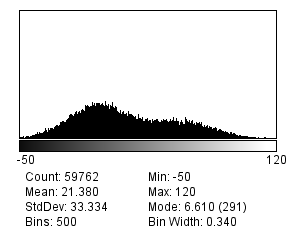

Averaging noise

At the beginning of this chapter it was stated how to calculate the new standard deviation if noisy pixels are added together (i.e. it is the square root of the sum of the original variances). If the original standard deviations are the same, the result is always something higher. But if the pixels are averaged, then the resulting noise standard deviation is lower.
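As a worked example of this rule, assume two independent noisy pixels that share the same standard deviation $\sigma$ (a symbol introduced here purely for illustration). Adding them, and then halving the sum to get the average, gives:

$$
\sigma_{\text{sum}} = \sqrt{\sigma^2 + \sigma^2} = \sqrt{2}\,\sigma \approx 1.41\,\sigma,
\qquad
\sigma_{\text{mean}} = \tfrac{1}{2}\,\sigma_{\text{sum}} = \frac{\sigma}{\sqrt{2}} \approx 0.71\,\sigma
$$

Repeating the argument for $N$ independent pixels gives a standard deviation of $\sigma/\sqrt{N}$ for their mean, so averaging four pixels halves the noise.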

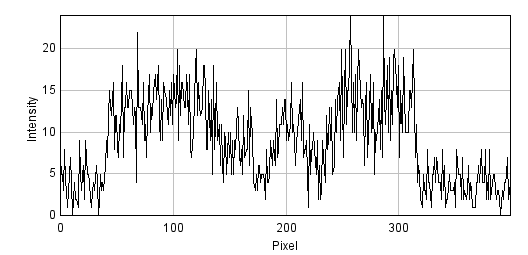

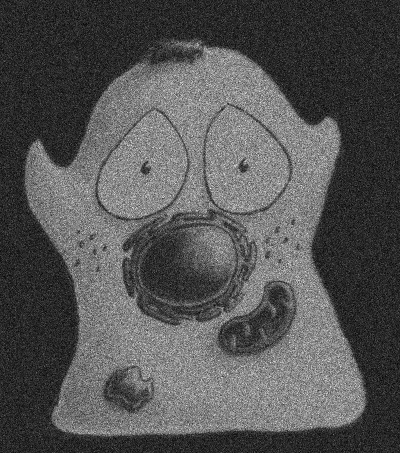

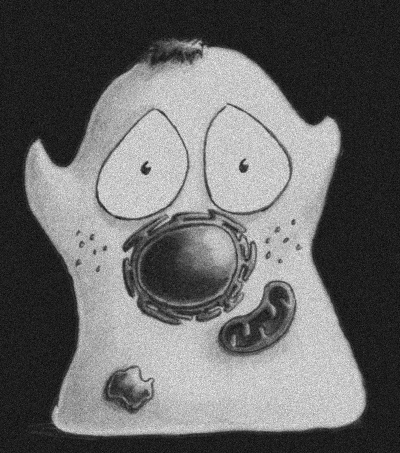

This implies that if we were to average two independent noisy images of the same scene with similar SNRs, we would get a result that contains less noise, i.e. a higher SNR. This is the idea underlying our use of linear filters to reduce noise in Filters, except that rather than using two images we computed our averages by taking other pixels from within the same image (Figure 4).
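The effect of averaging several images can be simulated directly. In this sketch the 'scene' is a flat image with a true value of 100 and Gaussian noise of standard deviation 10 in every frame; those numbers, the frame counts, and the helper `noisy_image` are assumptions chosen for illustration.

```python
import math
import random
import statistics

rng = random.Random(1)  # fixed seed so the run is repeatable
true_value, sigma, n_pixels = 100.0, 10.0, 20_000  # illustrative values


def noisy_image():
    """One noisy acquisition of a flat scene, stored as a flat list of pixels."""
    return [true_value + rng.gauss(0.0, sigma) for _ in range(n_pixels)]


results = {}
for n_frames in (1, 2, 4, 16):
    frames = [noisy_image() for _ in range(n_frames)]
    # Average the frames pixel by pixel
    averaged = [sum(values) / n_frames for values in zip(*frames)]
    results[n_frames] = statistics.pstdev(averaged)
    expected = sigma / math.sqrt(n_frames)
    print(f"{n_frames:>2} frames: noise std {results[n_frames]:.2f} "
          f"(expected about {expected:.2f})")
```

Since the signal stays at 100 while the noise standard deviation falls, the SNR rises in proportion to the square root of the number of frames averaged.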

Poisson noise

In 1898, Ladislaus Bortkiewicz published a book entitled The Law of Small Numbers. Among other things, it included a now-famous analysis of the number of soldiers in different corps of the Prussian cavalry who were killed by being kicked by a horse, measured over a 20-year period. Specifically, he showed that these numbers follow a Poisson distribution.

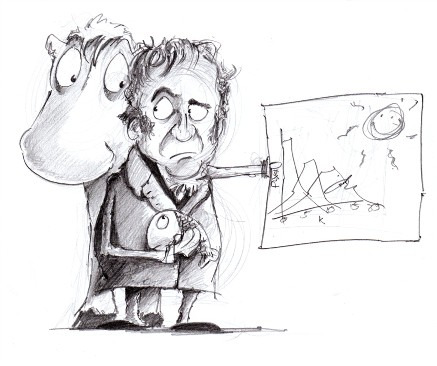

This distribution, introduced by Siméon Denis Poisson in 1838, gives the probability of an event happening a certain number of times, given that we know (1) the average rate at which it occurs, and (2) that all of its occurrences are independent. However, the usefulness of the Poisson distribution extends far beyond gruesome military analysis to many, quite different applications – including the probability of photon emission, which is itself inherently random.

Suppose that, on average, a single photon will be emitted from some part of a fluorescing sample within a particular time interval. The randomness entails that we cannot say for sure what will happen on any one occasion when we look; sometimes one photon will be emitted, sometimes none, sometimes two, occasionally even more. What we are really interested in, therefore, is not precisely how many photons are emitted, which varies, but rather the rate at which they would be emitted under fixed conditions, which is a constant. The difference between the number of photons actually emitted and the true rate of emission is the photon noise. The trouble is that keeping the conditions fixed might not be possible: leaving us with the problem of trying to figure out rates from single, noisy measurements.

Signal-dependent noise

Clearly, since it is a rate that we want, we could get that with more accuracy if we averaged many observations – just like with Gaussian noise, averaging reduces photon noise. Therefore, we can expect smoothing filters to work similarly for both noise types – and they do.

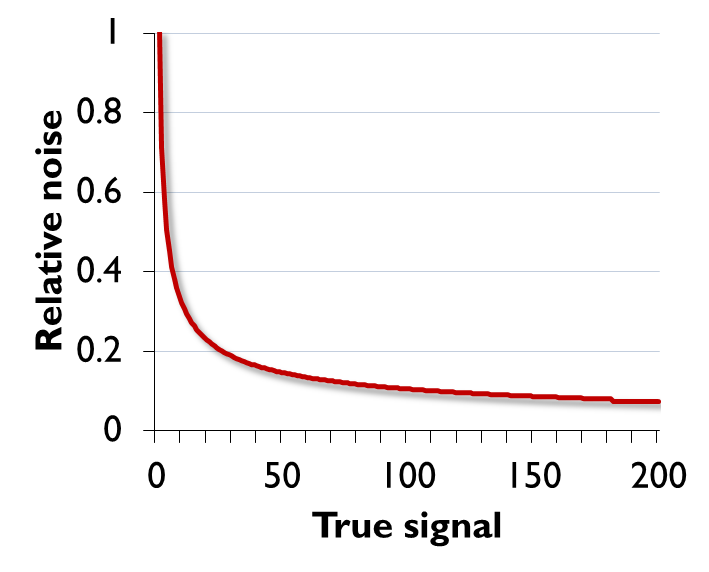

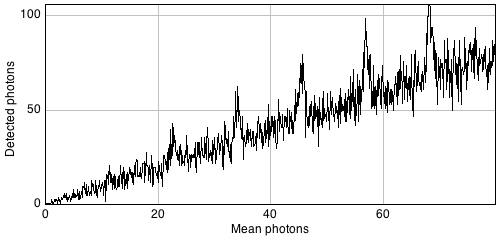

The primary distinction between the noise types, however, is that Poisson noise is signal-dependent, and does change according to the number of emitted (or detected) photons. Fortunately, the relationship is simple: if the rate of photon emission is $\lambda$, the noise variance is also $\lambda$, and the noise standard deviation is $\sqrt{\lambda}$.
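This relationship between rate and variance can be checked by simulation. Python's standard library has no Poisson sampler, so the sketch below includes a small one based on Knuth's multiplication method, which is adequate for the modest rates used here. The helper name `poisson`, the rates 1, 10 and 100, and the sample count are illustrative assumptions.

```python
import math
import random
import statistics


def poisson(rng, rate):
    """Draw one Poisson-distributed count (Knuth's method; for modest rates)."""
    limit = math.exp(-rate)
    count, product = 0, rng.random()
    while product > limit:
        count += 1
        product *= rng.random()
    return count


rng = random.Random(7)  # fixed seed so the run is repeatable
n_samples = 10_000

measured = {}
for rate in (1, 10, 100):
    counts = [poisson(rng, rate) for _ in range(n_samples)]
    mean = statistics.fmean(counts)
    variance = statistics.pvariance(counts)
    measured[rate] = (mean, variance)
    print(f"rate {rate:>3}: mean {mean:.2f}, variance {variance:.2f}, "
          f"std {math.sqrt(variance):.2f}")
```

For each rate, the measured mean and variance should both be close to the rate itself, and the standard deviation close to its square root.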

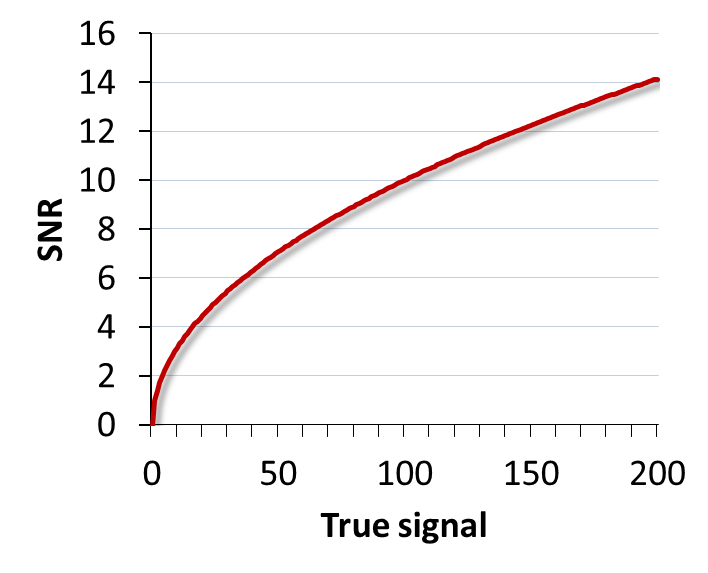

The SNR for Poisson noise

If the standard deviation of noise was the only thing that mattered, this would suggest that we would be better off not detecting much light: then photon noise is lower. But the SNR is a much more reliable guide. For noise that follows a Poisson distribution this is particularly easy to calculate. Substituting a signal $\lambda$ and a noise standard deviation $\sqrt{\lambda}$ into the formula for the SNR gives

$$\text{SNR} = \frac{\lambda}{\sqrt{\lambda}} = \sqrt{\lambda}$$

Therefore the SNR of photon noise is equal to the square root of the signal! This means that as the average number of emitted (and thus detected) photons increases, so too does the SNR. More photons → a better SNR, directly leading to the assertion that to reduce noise you need to detect more photons.
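A few numbers show how slowly the square root grows. The function name `poisson_snr` and the photon counts below are illustrative choices, and only photon noise is considered.

```python
import math


def poisson_snr(rate):
    """SNR for pure photon noise: the signal divided by its square root."""
    return rate / math.sqrt(rate)


for rate in (1, 4, 100, 10_000):
    print(f"{rate:>6} photons -> SNR = {poisson_snr(rate):g}")
```

Doubling the SNR requires four times as many photons, which is why large improvements can be expensive in exposure time or illumination.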

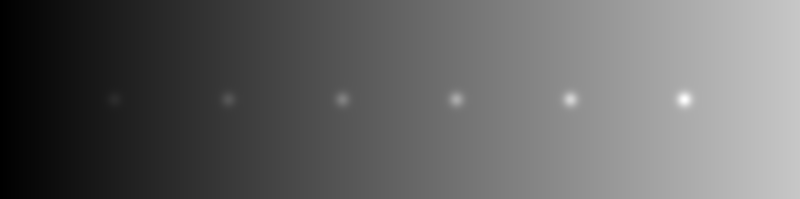

Poisson noise & detection

So why should you care that photon noise is signal-dependent?

One reason is that it can make features of identical sizes and brightnesses easier or harder to detect in an image purely because of the local background. This is illustrated in Figure 9.

In general, if we want to see a fluorescence increase of a fixed number of photons, this is easier to do if the background is very dark. But if the fluorescence increase is defined relative to the background, it will be much easier to identify if the background is high. Either way, when attempting to determine the number of any small structures in an image, for example, we need to remember that the numbers we will be able to detect will be affected by the background nearby. Therefore results obtained from bright and dark regions might not be directly comparable.
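One rough way to put numbers on this is to divide the size of a brightness increase by the photon noise standard deviation of the background it sits on. This measure, the name `visibility`, and the background levels of 10 and 1000 photons are assumptions made for this sketch rather than definitions from the chapter.

```python
import math


def visibility(increase, background):
    """Brightness increase divided by the photon noise std of the background."""
    return increase / math.sqrt(background)


dark, bright = 10.0, 1000.0  # assumed background levels, in photons

# Case 1: the feature adds a fixed 10 photons, whatever the background
fixed = {bg: visibility(10.0, bg) for bg in (dark, bright)}

# Case 2: the feature is 10% brighter than its background
relative = {bg: visibility(0.10 * bg, bg) for bg in (dark, bright)}

for bg in (dark, bright):
    print(f"background {bg:>6g}: fixed increase {fixed[bg]:.2f}, "
          f"relative increase {relative[bg]:.2f}")
```

The fixed increase stands out roughly ten times more against the dark background, while the relative increase stands out roughly ten times more against the bright one.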

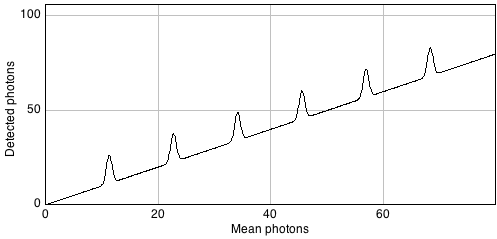

Combining noise sources

Combining our noise sources then, we can imagine an actual pixel value as being the sum of three values: the true rate of photon emission, the photon noise component, and the read noise component[5]. The first of these is what we want, while the latter two are random numbers that may be positive or negative.

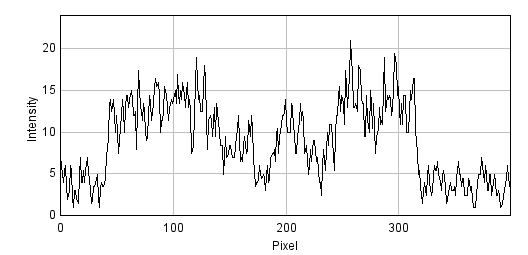

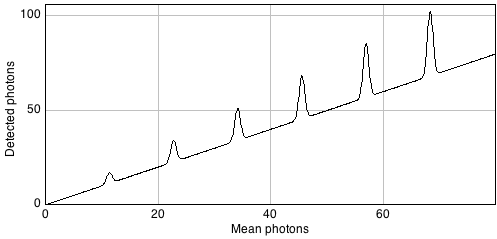

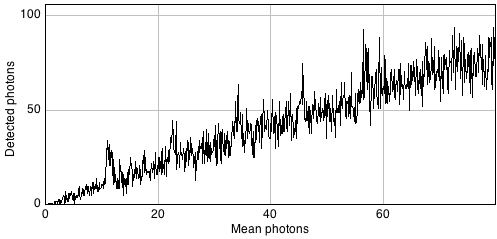

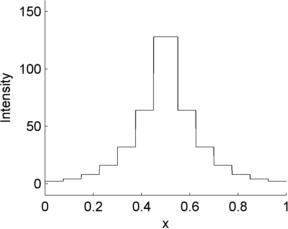

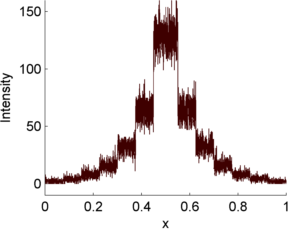

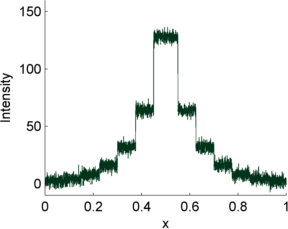

This is illustrated in Figure 10 using a simple 1D signal consisting of a series of steps. Random values are added to this to simulate photon and read noise. Whenever the signal is very low (indicating few photons), the variability in the photon noise is also very low in absolute terms, although it is high relative to the signal (B). This variability increases when the signal increases. However, in the read noise case (C), the variability is similar everywhere. When both noise types are combined in (D), the read noise dominates completely when there are few photons, but has very little impact whenever the signal increases. Photon noise has already made detecting relative differences in brightness difficult when there are few photons; with read noise, it can become hopeless.

Therefore overcoming read noise is critical for low-light imaging, and the choice of detector is extremely important (see Microscopes & detectors). But, where possible, detecting more photons is an extremely good thing anyway, since it helps to overcome both types of noise.
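The balance between the two noise sources can be worked out for each step of such a signal by combining the standard deviations as the square root of the summed variances. In this sketch the step heights and the read noise standard deviation of 5 photons are assumed values, not those used in the figure.

```python
import math

read_noise_std = 5.0          # assumed detector read noise, in photons
steps = (1, 10, 100, 1000)    # assumed signal levels, in photons

rows = []
for signal in steps:
    photon_std = math.sqrt(signal)  # Poisson: std is the square root of the rate
    total_std = math.sqrt(photon_std**2 + read_noise_std**2)
    read_fraction = read_noise_std**2 / total_std**2  # share of total variance
    rows.append((signal, photon_std, total_std, signal / total_std, read_fraction))
    print(f"signal {signal:>5}: photon std {photon_std:6.2f}, "
          f"total std {total_std:6.2f}, SNR {signal / total_std:6.2f}, "
          f"read noise share of variance {read_fraction:.0%}")
```

At one photon nearly all of the variance comes from read noise, whereas at 1000 photons it contributes only a few percent.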

Finding photons

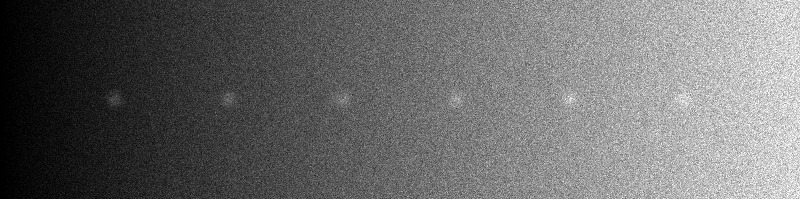

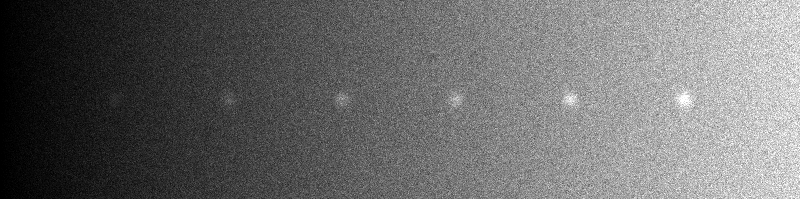

There are various places from which the extra photons required to overcome noise might come. One is to simply acquire images more slowly, spending more time detecting light. If this is too harsh on the sample, it may be possible to record multiple images quickly. If there is little movement between exposures, these images could be added or averaged to get a similar effect (Figure 11).

An alternative would be to increase the pixel size, so that each pixel incorporates photons from larger regions – although clearly this comes at a cost in spatial information.
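The trade-off can be simulated by summing 2×2 blocks of a flat, photon-noise-limited image. The sketch below uses only the standard library, including a small Poisson sampler based on Knuth's method. The image size, the rate of 4 photons per pixel, and the helpers `poisson` and `snr` are illustrative assumptions, and read noise is ignored.

```python
import math
import random
import statistics


def poisson(rng, rate):
    """Draw one Poisson-distributed count (Knuth's method; for modest rates)."""
    limit = math.exp(-rate)
    count, product = 0, rng.random()
    while product > limit:
        count += 1
        product *= rng.random()
    return count


def snr(img):
    """Mean pixel value divided by the pixel standard deviation (flat image)."""
    pixels = [p for row in img for p in row]
    return statistics.fmean(pixels) / statistics.pstdev(pixels)


rng = random.Random(3)  # fixed seed so the run is repeatable
size, rate = 128, 4.0   # assumed image size and photons per pixel

image = [[poisson(rng, rate) for _ in range(size)] for _ in range(size)]

# 2x2 binning: sum each non-overlapping block of four pixels
binned = [
    [image[y][x] + image[y][x + 1] + image[y + 1][x] + image[y + 1][x + 1]
     for x in range(0, size, 2)]
    for y in range(0, size, 2)
]

snr_raw, snr_binned = snr(image), snr(binned)
print(f"original {size}x{size}: SNR about {snr_raw:.2f}")
print(f"binned {size // 2}x{size // 2}: SNR about {snr_binned:.2f}")
```

Each binned pixel collects four times as many photons, so the SNR roughly doubles, but the image has half as many pixels along each axis.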